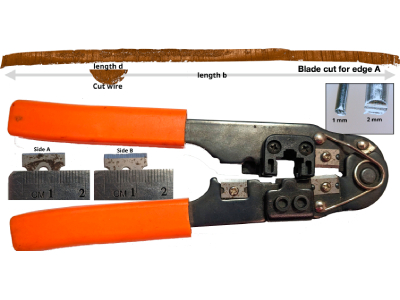

(Top) A comparison between a wire and a blade cut requires sliding the wire along the entire blade cut length to determine the best match (or whether there is a match). Surfaces shown are rendered 2D topographical scans of a wire and blade cut taken with a confocal light microscope. (Bottom) RJ45 crimp tool with a 1.5 cm razor blade used for cutting. 1 mm and 2 mm diameter aluminum wires cut with the pliers are shown in a box in the top right corner. Credit: Vanderplas, Susan, et al., University of Nebraska-Lincoln.

When wires are cut, the tool produces striations on the cut surface; as in other forms of forensic analysis, these striation marks are used to connect the evidence to the source that created them. Here, we argue that the practice of comparing two wire cut surfaces introduces complexities not present in better-investigated forensic examination of toolmarks such as those observed on bullets, as wire comparisons inherently require multiple distinct comparisons, increasing the expected false discovery rate. We call attention to the multiple comparison problem in wire examination and relate it to other situations in forensics that involve multiple comparisons, such as database searches.

In forensic evaluations, a single conclusion often relies on many comparisons, either implicitly or explicitly. Multiple comparisons arise persistently when developing statistical methods to address scientific problems, and greatly increase the probability of false discoveries. Now that vast databases and efficient algorithms are routinely used in forensic evaluations to propose matches to crime scene items, the problem of close nonmatches due to multiple comparisons becomes critically important. This often-ignored issue increases the false discovery rate (FDR) and can contribute to the erosion of public trust in the justice system through the conviction of innocent individuals. The multiple comparison problem is not new: it has been raised in the past with regard to DNA and latent print evaluations. One of the root causes leading to the wrongful accusation of Brandon Mayfield in the 2004 Madrid train bombing case was that the large size of the IAFIS database used to search for similar prints made it possible to locate “unusually” close nonmatches. As database size increases, so does the probability of finding a close nonmatch.

Compounding this issue, the use of algorithms also results in a large number of comparisons that are not obvious to the user. For example, the cross-correlation function, which computes the correlation for each alignment of two sequences, was one of the first measures proposed to quantify the similarity between two patterns in response to the 2009 NRC report, and continues to be used in many pattern searching algorithms to find the best alignment between two images and to quantify their overall similarity. Finding the best alignment often consists of sliding one surface across the whole length (for one-dimensional patterns, such as striations) or area (for two-dimensional sources, such as impression marks) of the other item while keeping track of the value of a similarity measure. This mirrors the forensic examination process: the examiner visually rotates and shifts items under a comparison microscope to align two surfaces. In order to avoid false accusations and the corresponding impact on public perception of forensics, we must address the problem of multiple comparisons in database and alignment searches and control their effect on FDRs.
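The sliding search described above can be illustrated with a minimal sketch. It assumes one-dimensional striation profiles stored as plain lists of heights and uses a Pearson correlation at each offset as the similarity measure; the function names and the toy profiles are hypothetical and are not taken from the study or from any forensic software.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is flat)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def best_alignment(long_profile, short_profile):
    """Slide short_profile along long_profile one position at a time.

    Returns (best offset, score at that offset, number of alignments tried).
    """
    width = len(short_profile)
    n_positions = len(long_profile) - width + 1
    scores = [
        pearson(long_profile[start:start + width], short_profile)
        for start in range(n_positions)
    ]
    best = max(range(n_positions), key=scores.__getitem__)
    return best, scores[best], n_positions


# Hypothetical toy data: the "wire" profile is copied from positions 3..6 of the "blade" profile.
blade = [0.0, 0.2, 0.1, 0.9, 0.3, 0.8, 0.1, 0.4, 0.0, 0.5]
wire = [0.9, 0.3, 0.8, 0.1]
offset, score, n_compared = best_alignment(blade, wire)
print(offset, round(score, 3), n_compared)
```

Only the best score is usually reported, but the search evaluated every possible offset to find it. Each of those evaluations is a comparison that the user never sees.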

Here, we consider the multiple comparisons problem that arises from a relatively simple toolmark examination: matching a cut wire to a wire-cutting tool. We describe the comparison approach, estimate the (minimal) number of comparisons that are needed to carry out the examination, and discuss how the FDR changes with the number of comparisons involved, using error rates derived from published black-box studies.

Examination Process

A forensic examiner tasked with determining whether a wire in evidence was cut by a recovered tool will create one or more blade cuts, which are then compared to the cut surface of the wire recovered from the scene. These cuts are made in a sheet of material matching the wire composition and may be performed at multiple angles, as the angle of the tool to the substrate can affect which striations are recorded on the substrate surface. The blade cuts will then be compared to the wire under a comparison microscope, though eventually, automatic comparison algorithms may also be validated for lab use. Each side of each blade cut will be compared to each side of the wire; different tool designs have between two and four cutting surfaces in contact with the substrate.

Methods: Calculating the Number of Comparisons

In order to calculate the number of comparisons carried out in the course of one examination, we define b to be the length of the blade cut and d to be the diameter of the wire. We assume that the wire is covered with striations suitable for comparison across its full diameter d. If this is not the case, we reduce the value of d accordingly. Both the blade cut and the wire are either digitally scanned at resolution r mm per pixel, or visually examined using a microscope with a resolution that can be expressed as equivalent to that of a digital scan. Imagine that we move the cut wire along the blade cut in order to assess whether striations on the blade cut match the striations on the wire. We can move the wire unit by unit (one pixel of width r at a time), or we can move the wire by its full diameter d, with no overlap with the previous comparison.

The first option gives us the maximum number of comparisons, b/r - d/r + 1, while the second option gives us the minimum number of comparisons, b/d. In the first case, sequential comparisons share much of the same physical data and are highly related; in the second case, no data are shared between physical comparisons, and we can expect that they are statistically independent, though empirically there will be nonzero correlations due to physical similarities between striations. For simplicity, let us consider the number of comparisons to lie somewhere between these two estimates. Note that when b/d ≈ 1, as in some toolmark comparisons, the upper number of comparisons also goes to 1. Finally, we must consider the number of surfaces which must be compared: the wire may have one or two sets of striae and there may be two to four blade cut surfaces to examine, depending on the tool. This results in a multiplier of as much as 8 (two sets of striae on the wire times four blade cut surfaces).
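As a rough illustration of these bounds, the sketch below evaluates b/d and b/r - d/r + 1 and applies the surface multiplier. The blade length (15 mm) and wire diameter (2 mm) follow the figure caption; the scan resolution of 0.001 mm per pixel and the function name are assumptions chosen only for this example.

```python
def comparison_bounds(b_mm, d_mm, r_mm, wire_surfaces=1, blade_surfaces=1):
    """Return (minimum, maximum) number of comparisons for one examination.

    b_mm: length of the blade cut, d_mm: usable wire diameter,
    r_mm: scan resolution in mm per pixel (an assumed value in this sketch).
    """
    b_px = round(b_mm / r_mm)          # blade cut length in pixels
    d_px = round(d_mm / r_mm)          # wire diameter in pixels
    per_pair_max = b_px - d_px + 1     # slide pixel by pixel: b/r - d/r + 1
    per_pair_min = b_mm / d_mm         # non-overlapping steps: b/d
    pairs = wire_surfaces * blade_surfaces
    return per_pair_min * pairs, per_pair_max * pairs


# One wire surface against one blade cut surface.
low, high = comparison_bounds(15.0, 2.0, 0.001)
print(low, high)          # 7.5 13001

# Worst case from the text: 2 sets of wire striae x 4 blade cut surfaces = 8 pairs.
low_all, high_all = comparison_bounds(15.0, 2.0, 0.001, wire_surfaces=2, blade_surfaces=4)
print(low_all, high_all)  # 60.0 104008
```

Even the minimum estimate under these assumed values implies several effectively independent comparisons per surface pair, and dozens once all surface pairs are counted.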

Discussion and Conclusions

Forensic practitioners often report the findings from their examinations in the form of a categorical conclusion reflecting a single decision. This is misleading when the decision relies on multiple comparisons which are not individually presented in reports or testimony. In this short contribution, we have shown that the implicit comparisons performed during forensic analysis of wire cuts increase the family-wise error rate.
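To see how quickly the family-wise error rate can grow, consider a minimal sketch under two simplifying assumptions: the comparisons are statistically independent, and each has the same false positive probability. The probability of at least one false positive among n comparisons is then 1 - (1 - e)^n. The per-comparison rate of 0.01 used below is a hypothetical placeholder, not an estimate from the study or from any black-box study.

```python
def family_wise_error_rate(per_comparison_rate, n_comparisons):
    """Probability of at least one false positive among n independent comparisons."""
    return 1.0 - (1.0 - per_comparison_rate) ** n_comparisons


# Hypothetical per-comparison false positive rate (an assumption for illustration only).
rate = 0.01
for n in (1, 8, 60):
    print(n, round(family_wise_error_rate(rate, n), 4))
```

Because sliding comparisons overlap and are correlated, the independence assumption does not hold exactly in practice; the sketch only shows the direction and rough scale of the effect.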

We describe a simple scenario where a wire is cut using a two-sided blade, but the findings apply to any situation where a forensic evaluation involves multiple comparisons, including, e.g., database searches. Forensic practitioners should understand how the number of comparisons can affect the accuracy of their final conclusion. We propose three strategies to enhance transparency and enable more reliable estimates of examination-specific error rates.

First, examiners should report (or defense attorneys should request) the overall length or area of surfaces generated during the examination process, along with the total consecutive length or area of the recovered evidence. These pieces of information will take the place of b and d and facilitate calculation of examination-wide error rates.

Second, researchers should conduct studies relating the length/area of comparison surface to the error rate. For instance, we have pooled studies looking at bullet striations and firing pin shear marks because we could not find black-box error rate studies of wire cuts. The lengths of these striated surfaces differ by orders of magnitude, but the pooled studies represent the best available estimate of the error rate for striated materials. New studies should be designed to assess error rates (false discovery and false elimination) when examiners are making difficult comparisons.

Finally, when databases are used at any stage of the forensic evidence evaluation process (from suitability assessment and triage to reports which will be used at trial), the number of database items searched (or comparisons made) and the number of results returned must be reported. Additionally, the number of results used for further manual comparison should also be reported. For example, if a firearms examiner searches a local NIBIN database with 1,000 entries, requests the 20 closest matches to her evidence, and then carries out a physical examination of five exemplars from the list of 20, all of those values should be clearly reported to enable estimation of the family-wise error rate. This will help make the multiple comparison issue accessible to everyone involved in evaluating the value of forensic evidence: examiners, lawyers, jurors, and judges.

Republished courtesy of the University of Nebraska-Lincoln.